Understanding Your Organization’s AI Maturity: A Roadmap to Transformation

Deep learning, a subfield of machine learning concerned with algorithms inspired by the structure and function of the brain, is behind many exciting technology trends such as robotics and image recognition, and it is hugely popular. According to some experts, deep learning is the fastest-growing area in artificial intelligence, and its full power is as yet unknown. It already forms part of the technology in application areas such as autonomous vehicles, smart personal assistants, and precision medicine, and, as they say, the sky is the limit.

Given the immense potential of deep learning, it is hardly surprising that demand for people with expertise in this area is increasing. IT professionals who know Python and want to expand their skill sets would do well to become familiar with Keras, a powerful, free, open-source Python library. Keras can be used with Theano and TensorFlow to build almost any sort of deep learning model.

Let’s talk about Keras

This introduction to Keras is an extract from the best-selling Deep Learning with Python by François Chollet, published by Manning Publications. The book introduces readers to the field of deep learning and builds their understanding through intuitive explanations and practical examples.

Keras has the following key features:

Keras is distributed under the permissive MIT license, which means it can be freely used in commercial projects. It’s compatible with any version of Python from 2.7 to 3.5. Its documentation is available at keras.io.

Keras has over 50,000 users, ranging from academic researchers and engineers at both start-ups and large companies to graduate students and hobbyists. Keras is used at Google, Netflix, Yelp, and CERN, and at dozens of start-ups working on a wide range of problems (including a self-driving start-up, Comma.ai).

Keras, TensorFlow, and Theano

Keras is a model-level library, providing high-level building blocks for developing deep learning models. It doesn’t handle low-level operations such as tensor manipulation and differentiation. Instead, it relies on a specialized, well-optimized tensor library that serves as the “backend engine” of Keras. Rather than picking a single tensor library and tying the implementation of Keras to it, Keras handles the problem in a modular way, so several different backend engines can be plugged seamlessly into Keras. Currently, the two existing backend implementations are the TensorFlow backend and the Theano backend. In the future, Keras may be extended to work with more engines if new ones come out that offer advantages over TensorFlow and Theano.

TensorFlow and Theano are two of the fundamental platforms for deep learning today. Theano is developed by the MILA lab at Université de Montréal, and TensorFlow is developed by Google. Any piece of code written with Keras can be run with TensorFlow or with Theano without having to change anything. You can switch between the two during development, which is often useful, for instance when one of the two engines proves to be faster for a specific task. Via TensorFlow (or Theano), Keras is able to run on both CPU and GPU seamlessly.

When running on CPU, TensorFlow wraps a low-level library for tensor operations called Eigen. On GPU, TensorFlow wraps a library of well-optimized deep learning operations called cuDNN, developed by NVIDIA.
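To make the backend switch described above concrete, here is a minimal sketch of how the choice is usually expressed in multi-backend Keras: a small JSON file (normally stored at `~/.keras/keras.json`) or the `KERAS_BACKEND` environment variable. The helper name `make_keras_config` is hypothetical and exists only for illustration. The field values shown are the common defaults, so treat them as assumptions to check against your own installation.

```python
import json
import os

def make_keras_config(backend="tensorflow"):
    """Build a dict shaped like the keras.json configuration file.

    Hypothetical helper for illustration; only the two backends named
    in this article are accepted.
    """
    if backend not in ("tensorflow", "theano"):
        raise ValueError("unsupported backend: %r" % backend)
    return {
        "image_data_format": "channels_last",  # assumed default
        "epsilon": 1e-07,                      # assumed default
        "floatx": "float32",                   # assumed default
        "backend": backend,
    }

# Text you could save as ~/.keras/keras.json to select the Theano backend.
config_text = json.dumps(make_keras_config("theano"), indent=4)
print(config_text)

# Alternative: set the environment variable before Keras is imported.
os.environ["KERAS_BACKEND"] = "theano"
```

The model code itself stays the same. Only this configuration changes when you move from one engine to the other.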

Developing with Keras: a quick overview

The typical Keras workflow looks like our example:

You can define a model in two ways: using the Sequential class (only for linear stacks of layers, which is the most common network architecture by far), or using the “functional API” (for directed acyclic graphs of layers, which lets you build completely arbitrary architectures).

As an example, here’s a two-layer model defined using the Sequential class (note that we’re passing the expected shape of the input data to the first layer):

Listing 1 A network definition using the Sequential model

from keras.models import Sequential

from keras.layers import Dense

model = Sequential()

model.add(Dense(32, activation='relu', input_shape=(784,)))

model.add(Dense(10, activation='softmax'))

And here’s the same model defined using the functional API. With this API, you’re manipulating the data tensor that the model processes, and applying layers to this tensor as if they were functions. A detailed guide to what you can do with the functional API can be found in the book itself.

Listing 2 A network definition using the functional API

from keras.models import Model

from keras.layers import Dense, Input

input_tensor = Input(shape=(784,))

x = Dense(32, activation='relu')(input_tensor)

output_tensor = Dense(10, activation='softmax')(x)

model = Model(inputs=input_tensor, outputs=output_tensor)
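Both definitions above describe the same computation. As a rough illustration of what that computation is, the following NumPy sketch runs one forward pass through a 784-to-32 ReLU layer followed by a 32-to-10 softmax layer. It is not how Keras implements these layers internally. The random weights and the names `forward`, `W1`, and `W2` are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

def relu(z):
    # Element-wise max(z, 0)
    return np.maximum(z, 0.0)

def softmax(z):
    # Subtract the row maximum for numerical stability
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

# Random weights stand in for trained parameters (illustration only).
W1 = rng.normal(scale=0.05, size=(784, 32))
b1 = np.zeros(32)
W2 = rng.normal(scale=0.05, size=(32, 10))
b2 = np.zeros(10)

def forward(x):
    hidden = relu(x @ W1 + b1)        # counterpart of Dense(32, activation='relu')
    return softmax(hidden @ W2 + b2)  # counterpart of Dense(10, activation='softmax')

batch = rng.random((4, 784))          # four fake input vectors
probs = forward(batch)
n_params = W1.size + b1.size + W2.size + b2.size
print(probs.shape, n_params)
```

Each output row is a probability distribution over 10 classes, and the two layers together hold 25,450 trainable parameters.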

Once your model architecture is defined, it doesn’t matter whether you used a Sequential model or the functional API: all subsequent steps are the same. The learning process is configured at the “compilation” step, where you specify the optimizer and loss function(s) that the model should use, as well as the metrics you want to monitor during training. Here’s an example with a single loss function, by far the most common case:

Listing 3 Defining a loss function and an optimizer

from keras.optimizers import RMSprop

model.compile(optimizer=RMSprop(lr=0.001), loss='mse', metrics=['accuracy'])
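To give a feel for what the optimizer and loss chosen at compilation do, here is a small NumPy sketch of the mean squared error and the textbook RMSprop update rule, applied to a one-parameter toy problem. It is a simplified illustration, not the Keras implementation. The function names and the `rho` and `eps` values are assumptions.

```python
import numpy as np

def mse(pred, target):
    # Mean squared error, the quantity selected by loss='mse'
    return float(np.mean((pred - target) ** 2))

def rmsprop_step(w, grad, cache, lr=0.001, rho=0.9, eps=1e-7):
    # Keep a running average of squared gradients and scale the step by it
    cache = rho * cache + (1.0 - rho) * grad ** 2
    w = w - lr * grad / (np.sqrt(cache) + eps)
    return w, cache

# Toy problem: find w such that x * w matches target (true value is 2.0).
x = np.array([1.0, 2.0, 3.0])
target = 2.0 * x
w, cache = 0.0, 0.0

loss_before = mse(x * w, target)
for _ in range(200):
    grad = np.mean(2.0 * (x * w - target) * x)  # d(mse)/dw
    w, cache = rmsprop_step(w, grad, cache)
loss_after = mse(x * w, target)

print(loss_before, loss_after)
```

With a learning rate of 0.001, each update moves the weight by only a small amount, so the loss falls gradually rather than in one jump.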

Lastly, the learning process itself consists of passing NumPy arrays of input data (and the corresponding target data) to the model via the fit() method, similar to what you’d do in scikit-learn or several other machine learning libraries:

Listing 4 Training a model

model.fit(input_tensor, target_tensor, batch_size=128, epochs=10)
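The batch size and the number of epochs passed to fit() determine how many weight updates training performs. The plain-Python sketch below shows the arithmetic, assuming a hypothetical dataset of 60,000 samples. The `iter_batches` helper is illustrative and not part of Keras.

```python
import math

def iter_batches(n_samples, batch_size):
    """Yield (start, end) index pairs covering the data in mini-batches."""
    for start in range(0, n_samples, batch_size):
        yield start, min(start + batch_size, n_samples)

n_samples = 60000   # hypothetical dataset size
batch_size = 128
epochs = 10

batches_per_epoch = math.ceil(n_samples / batch_size)
total_updates = batches_per_epoch * epochs
last_start, last_end = list(iter_batches(n_samples, batch_size))[-1]

print(batches_per_epoch, total_updates, last_end - last_start)
```

Under these assumptions, each epoch consists of 469 mini-batches, the last one holding the 96 leftover samples, for 4,690 updates in total.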

Want to learn more about Keras?

You can start by watching this short video

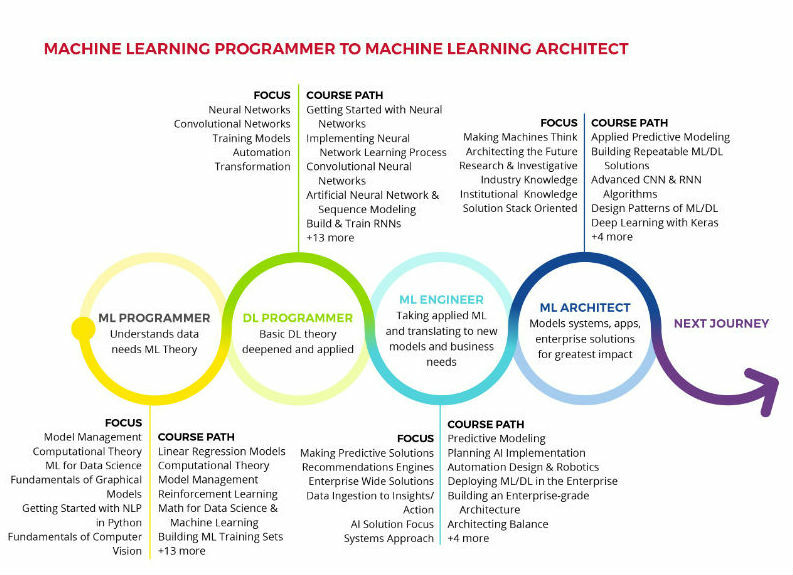

This course is part of the Machine Learning Programmer to Machine Learning Architect Skillsoft Aspire journey.


Skillsoft is partnering with Manning Publications, a leading publisher of technology and developer content, to deliver more than 250 current and backlist Manning titles to Skillsoft customers. Manning’s bestsellers include titles across a wide range of languages, technologies, and new tech, including Kubernetes in Action, Deep Learning with Python, Java 8 in Action, Grokking Algorithms, Spring Microservices in Action, Serverless Architectures on AWS, Functional Programming in C#, and Deep Learning with R. Manning’s highly respected content on foundational technology topics and emerging areas greatly expands Skillsoft Tech & Developer book offerings and enhances our Skillsoft Aspire learning journeys. Manning will add new titles to Skillsoft Books on a monthly basis.

François Chollet works on deep learning at Google in Mountain View, CA. He is the creator of the Keras deep-learning library, as well as a contributor to the TensorFlow machine-learning framework. He also does deep-learning research, with a focus on computer vision and the application of machine learning to formal reasoning. His papers have been published at major conferences in the field, including the Conference on Computer Vision and Pattern Recognition (CVPR), the Conference and Workshop on Neural Information Processing Systems (NIPS), the International Conference on Learning Representations (ICLR), and others.
